From Cost Visibility to Action: Scaling FinOps Intelligence with Databricks System Tables and Genie

This post walks through the architecture Entrada built around that observation, the Serverless Cost Control Accelerator, and, more importantly, the design principles behind it. Whether you’re a platform engineer, SRE, or FinOps lead trying to decide where to invest, the principles matter more than the product.

Building Secure, AI-Ready Medical Research Platforms on Databricks

Research organizations need faster, more reliable ways to prepare sensitive data for analysis without loosening their grip on governance and privacy. Across the medical research platforms we’ve built on Databricks, the same patterns keep proving their worth: cleaner ingestion, standardized de-identification, simpler access to research-ready datasets, and a foundation that holds up when analytics and AI ambitions grow. Here’s what we’ve learned about designing these environments well.

Lakebase: The Death of the Siloed Application Database

Every enterprise manages two separate, expensive database systems: OLTP for real-time transactions and OLAP for analytics. The pipeline connecting them is the most fragile thing in the entire stack. Databricks’ Lakebase makes that pipeline optional, offering a strategic opportunity to collapse two stacks into one and finally deliver the near-real-time data that critical business applications need.

Serverless by Workload Shape: Entrada’s Databricks Playbook for Real Price/Performance

Databricks is directionally right to push serverless. Its current guidance recommends serverless for supported workloads because it is the simplest, most reliable option for notebooks, jobs, and Lakeflow Spark Declarative Pipelines, and its compute selection guidance recommends serverless for most automated workloads while steering SQL tasks toward serverless SQL warehouses.

Fraud Detection at Scale: What It Really Takes

Fraud detection at scale is not just about catching suspicious activity faster. It is about building the data, AI, and governance foundation needed to detect risk reliably, explain decisions, and stay cost-efficient.

Monitoring ML Models in Production with Databricks

Most ML models do not break in development. They break quietly in production, when data changes, performance drifts, and no one notices until business trust is already slipping. That is why monitoring is not an afterthought. It is one of the foundations of enterprise AI.

True CI/CD for the Lakehouse: Infrastructure as Code (IaC) & DABs

There is a conversation I have had more times than I can count. A client tells me their team “already has CI/CD.” When I ask them to walk me through it, the answer usually sounds like this: a developer runs a notebook to completion, exports it, uploads it to a shared folder, and notifies the production team via Slack to “pull the latest version.” That is not CI/CD. That is a deployment ceremony wrapped in good intentions.

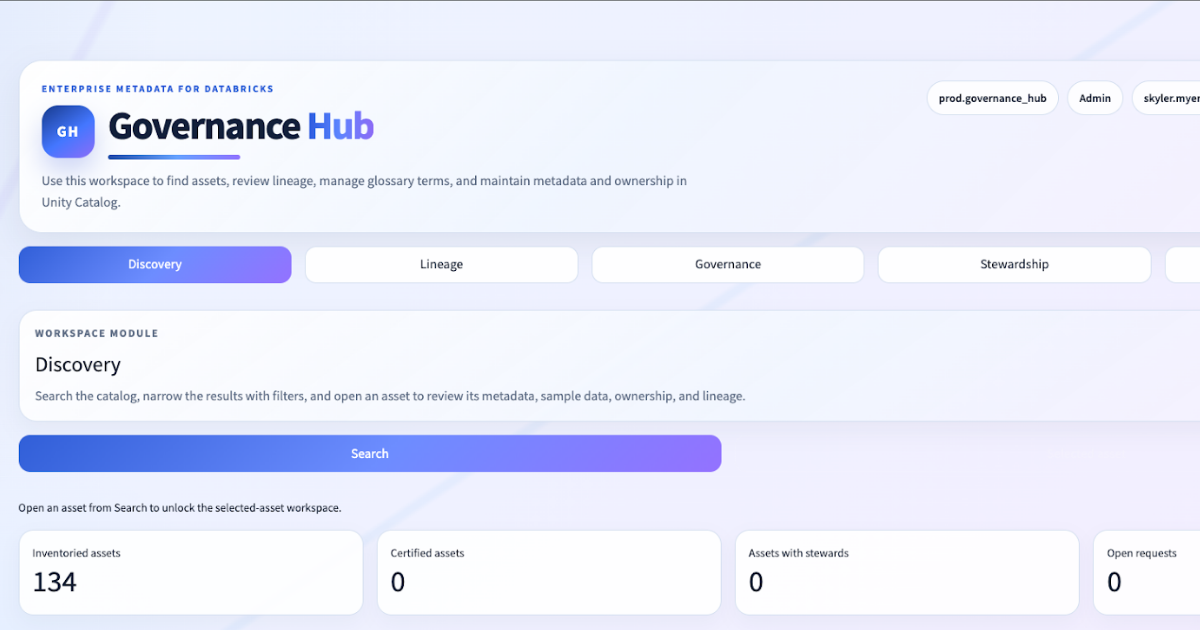

The Next Generation Data Governance Experience In Databricks

At Entrada, we spend a lot of time in environments where the governance conversation sounds the same.

The client already has Databricks. They already have Unity Catalog. They already have tables, schemas, comments, tags, and lineage. What they do not have is a governance operating surface that feels like a true metadata product: search-first discovery, entity-centric governance workflows, opinionated lineage workspaces, stewardship controls, and a place where governance activity actually happens.

Hidden Compute Costs in Enterprise Migrations: Why Execution Model Matters

Your Databricks migration pipeline is likely paying more for cluster lifecycle overhead than for actual data processing. That hidden penalty – the latency tax – emerges when every notebook invocation spins up its own cluster from scratch.

From DAX Filters to Data Contracts: Migrating Power BI Security to Unity Catalog

The security review took longer than the migration itself. I was auditing a client’s Power BI environment: 47 static RLS roles, each with its own DAX filter expression, each maintained by a different team, none of them connected to the data layer. When an analyst queried the same tables directly from a notebook, the filters simply didn’t apply. Two security models, one dataset, zero consistency.

Race to the Lakehouse

AI + Data Maturity Assessment

Unity Catalog

Rapid GenAI

Modern Data Connectivity

Gatehouse Security

Health Check

Sample Use Case Library